AI, ML & Data Engineering Content on InfoQ

-

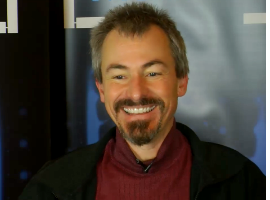

Nathan Marz on Storm, Immutability in the Lambda Architecture, Clojure

Nathan Marz explains the ideas behind the Lambda Architecture and how it combines the strengths of both batch and realtime processing as well as immutability. Also: Storm, Clojure, and much more.

-

Jeremy Pollack of Ancestry.com on Test-driven Development and More

Hadoop, the distributed file system, and MapReduce are just a few of the topics covered in this interview recorded live at QCon San Francisco 2013. An industry-standard Agile implementation and a lot of testing assure the development team at Ancestry.com that their app can handle the heavy traffic demands of the popular genealogy site.

-

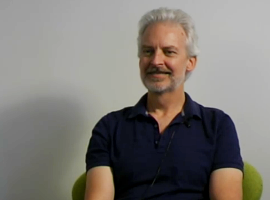

Cliff Click on In-Memory Processing, 0xdata H2O, Efficient Low Latency Java and GCs

Cliff Click explains 0xdata's H2O, a clustering and in-memory math and statistics solution (available for Hadoop and standalone), writing H2O's memory representation and compression in Java, low latency Java vs GCs, and much more.

-

Dean Wampler on Scalding, NoSQL, Scala, Functional Programming and Big Data

Dean Wampler explains Scalding and the other Hadoop support libraries, the return of SQL, how (big) data is the killer application for functional programming, Java 8 vs Scala, and much more.

-

Machine Learning Netflix Style with Xavier Amatriain

Xavier Amatriain discusses how Netflix uses specialized roles, including those of the Data Scientist and Machine Learning Engineer, to deliver valuable data at the right time to Netflix's customer base through a mixture of offline, online, and nearline data processes. Xavier also discusses what it takes to become a Machine Learning Engineer and how to gain real experience in the field.

-

Eva Andreasson on Hadoop, the Hadoop Ecosystem, Impala

Eva Andreasson explains the various Hadoop technologies and how they interact, real-time queries with Impala, the Hadoop ecosystem including Hue, Oozie, YARN, and much more.

-

Big Data's Role in Etsy's Product Development

Etsy's approach to big data has been to give the entire organization visibility into the different sources of data generated by its product, as well as access to the experts who know how to use it. Nell Thomas explains her role at Etsy and how Etsy's view of big data has shaped its product's evolution.

-

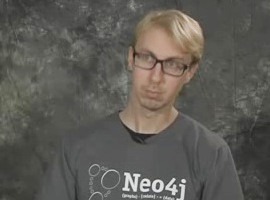

Emil Eifrem on NoSQL, Graph Databases, and Neo4j

Emil Eifrem looks back at the history of Neo4j, an open-source, NoSQL graph database supported by Neo Technology. He describes some real-world applications of graphs and domain modelling with graphs, and compares the performance of graph and relational databases. He also examines how Neo4j differs from other NoSQL and graph databases on the market and describes various Neo4j licensing options.

-

The Larger Purpose of Big Data with Pavlo Baron

Big Data means more than just the size of a dataset. Pavlo Baron explains different ways of applying Big Data concepts in various situations: from analytics, to delivering content, to medical applications. His larger vision for Big Data ranges from specialized Data Scientists, to learning Decision Support Systems, to helping mankind itself.

-

Ian Robinson discusses Service Evolution and Neo4j Feature Design

Ian Robinson discusses Neo4j's design choices for data storage and retrieval, CRUD operations, transactions, graph traversal and searches, and HA deployment strategies. He also shares his thoughts on hypermedia controls and the concept of consumer-driven contracts for continuous evolution of services.

-

Michael Hunger on Spring Data Neo4j, Graph Databases, Cypher Query Language

In this interview, Michael Hunger talks about the evolution of persistence technologies over the last decade and the emergence of NoSQL databases, and looks at where graph databases fit in. He describes the goals behind the Spring Data Neo4j project and its latest developments, and examines Cypher, a humane and declarative query language for graphs.

-

Erik Meijer on Big Data, Types of Data Stores and Reactive Programming

Erik Meijer explains the various aspects needed to categorise data stores, how reactive programming fits in with databases, the return to data transformation, denotational semantics, and much more.