Hadoop Content on InfoQ

-

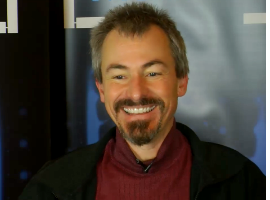

Chris Mattmann on Big Data Infrastructure for Scientific Data Processing

Chris Mattmann explains the type and magnitude of data produced in scientific projects like the Square Kilometer Array Telescope, the tools to use for scientific data processing and much more.

-

Eva Andreasson on Hadoop and Java 8

Eva Andreasson speaks to Charles Humble about how Apache Hadoop works, how developers and BI teams in traditional enterprises can start to use it in their organisations, how garbage collection impacts Hadoop jobs, and what interests her in Java 8.

-

Nathan Marz on Storm, Immutability in the Lambda Architecture, Clojure

Nathan Marz explains the ideas behind the Lambda Architecture and how it combines the strengths of both batch and realtime processing as well as immutability. Also: Storm, Clojure, and much more.

-

Jeremy Pollack of Ancestry.com on Test-driven Development and More

Hadoop, the distributed file system and MapReduce are just a few of the topics covered in this interview recorded live at QCon San Francisco 2013. An industry-standard Agile implementation and a lot of testing assure the development team at Ancestry.com that their app can handle the large traffic demands of the popular genealogy site.

-

Cliff Click on In-Memory Processing, 0xdata H2O, Efficient Low Latency Java and GCs

Cliff Click explains 0xdata's H2O, a clustering and in-memory math and statistics solution (available for Hadoop and standalone), writing H2O's memory representation and compression in Java, low latency Java vs GCs, and much more.

-

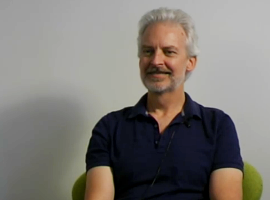

Dean Wampler on Scalding, NoSQL, Scala, Functional Programming and Big Data

Dean Wampler explains Scalding and the other Hadoop support libraries, the return of SQL, how (big) data is the killer application for functional programming, Java 8 vs Scala, and much more.

-

Eva Andreasson on Hadoop, the Hadoop Ecosystem, Impala

Eva Andreasson explains the various Hadoop technologies and how they interact, real-time queries with Impala, the Hadoop ecosystem including Hue, Oozie, YARN, and much more.

-

Eli Collins on Hadoop

Eli Collins discusses Cloudera's CDH4 release, which tasks are well suited for Hadoop, Hadoop and MapReduce vs SQL, the state of Hadoop, and much more.

-

Big Data Architecture at LinkedIn

In this interview at QCon London, LinkedIn’s Sid Anand discusses the problems they face when serving high-traffic, high-volume data. Sid explains how they’re moving some use cases from Oracle to gain headroom, and lifts the hood on their open source search and data replication projects, including Kafka, Voldemort, Espresso and Databus.

-

Optimizing for Big Data at Facebook

Hive co-creator Ashish Thusoo describes the Big Data challenges Facebook faced and presents solutions in two areas: reduction in the data footprint and CPU utilization. Generating 300 to 400 terabytes per day, they store data as RC files, which are split into blocks, with the data inside each block stored by column to get better compression. He also talks about the current Big Data ecosystem and trends for companies going forward.

-

All things Hadoop

In this interview, Ted Dunning talks about Hadoop, its current usage and its future. He explains the reasons for Hadoop's success and makes recommendations on how to start using it.

-

Costin Leau on Spring Data, Spring Hadoop and Data Grid Patterns

In this interview, recorded at the JavaOne 2011 conference, Spring Hadoop project lead Costin Leau talks about the current state and upcoming features of the Spring Data and Spring Hadoop projects. He also talks about the Caching and Data Grid architecture patterns.