The RealWorld-based benchmark, which compares implementations of a non-trivial full-stack application code-named Conduit across 18 front-end frameworks, recently updated its results. Thirteen of the 18 frameworks obtain a top-tier Lighthouse performance score (i.e. 90 or more out of a maximum of 100). Among the 18 frameworks, Svelte, Stencil, AppRun, Dojo, Hyperapp, and Elm exhibit the lowest payload transferred over the network (< 30 KB).

The RealWorld-based benchmark compares 18 implementations of RealWorld's Conduit application. Conduit, self-described as 'the mother of all demo apps', is a full-stack Medium.com clone with a set of API specs and features whose complexity seeks to mimic that of real-world full-stack applications. Eric Simons, a core maintainer of the RealWorld project, explains:

> It’s like TodoMVC, but for full-stack apps! (...) RealWorld shows you how the exact same real world blogging platform is built using React/Angular/& more on top of Node/Django/& more. Yes, you can mix and match them, because they all adhere to the same API spec.

The benchmark, which started in 2017, recently updated its evaluation of the Conduit implementations across 18 front-end frameworks. The 2019 edition ranks frameworks on three criteria: performance, size, and lines of code.

The performance score is computed by Lighthouse, a popular open-source, automated tool for improving the quality of web pages. Lighthouse has audits for performance, accessibility, progressive web apps, and more. Its performance score is computed from six weighted metrics, presented here in decreasing order of importance:

- Time to Interactive: measures how long it takes a page to become interactive.

- Speed Index: indicates how quickly the contents of a page are visibly populated. The lower the Speed Index, the better.

- First Contentful Paint: measures the time from navigation to the time when the browser renders the first bit of content from the DOM.

- First CPU Idle: measures when a page is minimally interactive (most, but maybe not all, user interface elements on the screen are interactive, and the page responds, on average, to most user input in a reasonable amount of time).

- First Meaningful Paint: measures when a user perceives that the primary content of a page is visible.

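- Estimated Input Latency: estimates how long the application takes to respond to user input during the busiest five-second window of page load.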
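As a rough illustration of how six weighted metrics can combine into one score, the sketch below takes a weighted average of per-metric scores, each already normalized to the 0 to 100 range. The weights (5, 4, 3, 2, 1, 0) are an assumption based on the Lighthouse configuration of that period and are not stated in this article; the function name and the sample values are hypothetical.

```python
# Assumed weights, listed in the same order as the metrics above. They reflect
# the Lighthouse configuration of the time as best recalled, not this article.
ASSUMED_WEIGHTS = {
    "time_to_interactive": 5,
    "speed_index": 4,
    "first_contentful_paint": 3,
    "first_cpu_idle": 2,
    "first_meaningful_paint": 1,
    "estimated_input_latency": 0,
}


def performance_score(metric_scores):
    """Return the weighted mean of per-metric scores (each 0-100), rounded."""
    total_weight = sum(ASSUMED_WEIGHTS.values())
    weighted_sum = sum(
        weight * metric_scores[name] for name, weight in ASSUMED_WEIGHTS.items()
    )
    return round(weighted_sum / total_weight)


# Hypothetical per-metric scores, invented for illustration only.
example_scores = {
    "time_to_interactive": 90,
    "speed_index": 95,
    "first_contentful_paint": 100,
    "first_cpu_idle": 92,
    "first_meaningful_paint": 98,
    "estimated_input_latency": 100,
}
print(performance_score(example_scores))  # 94 under the assumed weights
```

Under these assumed weights, a slow Time to Interactive costs far more than a slow First Meaningful Paint, which is consistent with the ordering of the list above.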

Lighthouse performance scoring distinguishes three scoring groups. The top tier, with a Lighthouse performance score between 90 and 100, identifies the top-performing sites. According to the RealWorld-based benchmark, most (13 out of 18) Conduit implementations belong to the top tier. The top 13 front-end frameworks are a mix of established front-end frameworks (like Elm, Dojo, Vue, Angular, Aurelia, Stencil, Svelte, and React), minimalistic front-end frameworks (like AppRun and Hyperapp), marginally used front-end frameworks (such as Crizmas or reframe), and a compile-to-JavaScript framework (Imba).

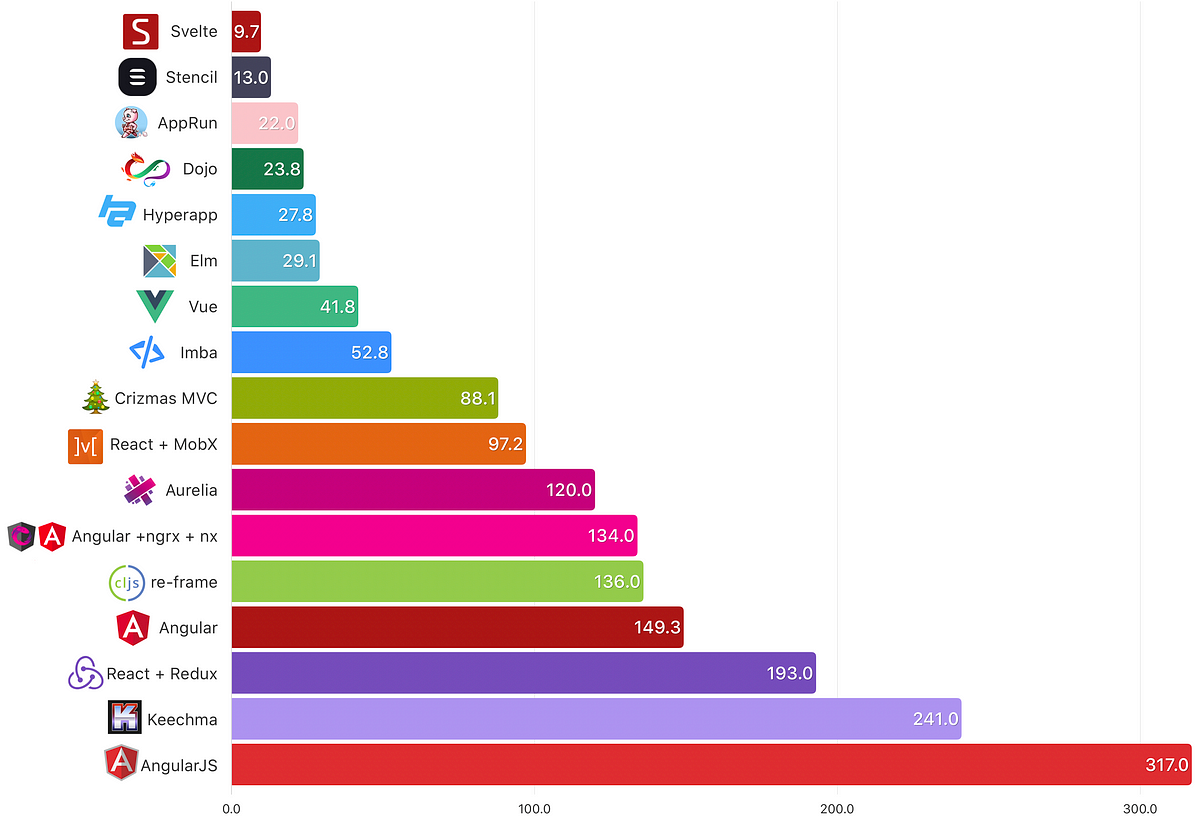
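The three scoring groups can be sketched as a simple threshold function. Only the 90 to 100 top tier comes from the text above; the cutoff of 50 between the two lower groups is an assumption based on Lighthouse's documentation of that period, and the function name, tier labels, and sample scores are hypothetical.

```python
def lighthouse_tier(score, top_cutoff=90, middle_cutoff=50):
    """Map a 0-100 performance score to one of three scoring groups.

    top_cutoff comes from the article; middle_cutoff is an assumption.
    """
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= top_cutoff:
        return "top"
    if score >= middle_cutoff:
        return "middle"
    return "bottom"


# Hypothetical scores, invented for illustration only.
sample_scores = [98, 93, 90, 85, 47]
top_tier_count = sum(1 for s in sample_scores if lighthouse_tier(s) == "top")
print(top_tier_count)  # 3
```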

The 18 Conduit implementations are also ranked by size. The benchmark's author details the rationale behind the size criterion and how it is computed:

> Transfer size is from the Chrome network tab. GZIPed response headers plus the response body, as delivered by the server. (...) The smaller the file, the faster the download, and less to parse.

Six frameworks (Svelte, Stencil, AppRun, Dojo, Hyperapp, and Elm), all of which also appear among the 13 top-performing frameworks, are in the top tier for size (< 30 KB).

Your correspondent identified the top tier by applying a k-means clustering algorithm to partition the 18 size observations into five clusters.
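The clustering step can be sketched as follows. This is a minimal one-dimensional k-means (Lloyd's algorithm) under stated assumptions, not the exact procedure used for the article: the seeding strategy is a deterministic stand-in for the usual random initialization, the function name is hypothetical, and the 18 sizes are invented for illustration rather than taken from the benchmark.

```python
def kmeans_1d(values, k, max_iterations=100):
    """Partition one-dimensional values into k clusters with Lloyd's algorithm.

    Returns the clusters as sorted lists, ordered by increasing centroid.
    """
    if not 1 <= k <= len(set(values)):
        raise ValueError("k must be between 1 and the number of distinct values")

    # Deterministic farthest-point seeding (an assumption made for
    # reproducibility): start at the minimum, then repeatedly add the value
    # that is farthest from its nearest already-chosen centroid.
    centroids = [min(values)]
    while len(centroids) < k:
        centroids.append(
            max(values, key=lambda v: min(abs(v - c) for c in centroids))
        )

    labels = None
    for _ in range(max_iterations):
        # Assignment step: attach each value to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda i: abs(v - centroids[i])) for v in values
        ]
        if new_labels == labels:
            break  # assignments are stable, so the centroids are too
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for i in range(k):
            members = [v for v, label in zip(values, labels) if label == i]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[i] = sum(members) / len(members)

    order = sorted(range(k), key=lambda i: centroids[i])
    return [
        sorted(v for v, label in zip(values, labels) if label == i)
        for i in order
    ]


# Hypothetical transfer sizes in KB, invented for illustration only.
sizes_kb = [10, 14, 18, 22, 26, 29, 60, 65, 70, 75,
            120, 125, 130, 200, 210, 220, 500, 520]
clusters = kmeans_1d(sizes_kb, k=5)
top_tier = clusters[0]  # the cluster with the smallest centroid
print(top_tier)
```

With one-dimensional data, the cluster with the smallest centroid plays the role of the size top tier. Results of k-means depend on the seeding, so a different initialization may draw the tier boundaries differently.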

The frameworks' characteristics may explain the small size transferred over the wire:

- Svelte, self-described as 'the magical disappearing UI framework', compiles away its API to optimized JavaScript

- Stencil touts a 6 KB runtime and compilation to Web Components

- AppRun and Hyperapp have a minimal footprint (3 KB and 1 KB, respectively)

- Recent Dojo versions feature automatic code splitting and optimize for the PRPL performance pattern

- Elm 0.19 touts optimized asset size

The abundance of front-end frameworks has led to the popularity of benchmarks that aim to compare frameworks in meaningful ways. A framework's ranking may vary widely from one benchmark to another, depending on what is being measured, the benchmark's methodology and relevance, and the scoring algorithm. The choice of a specific front-end framework should remain a holistic decision integrating quantitative and qualitative variables.