Google recently announced the general availability of A2 Virtual Machines (VMs), based on NVIDIA Ampere A100 Tensor Core GPUs, in Compute Engine. According to the company, the A2 VMs allow customers to run their NVIDIA CUDA-enabled machine learning (ML) and high-performance computing (HPC) scale-out and scale-up workloads efficiently and at a lower cost.

Google first introduced the Compute Engine A2 VMs in July last year for customers looking for high-performance compute for their machine learning workloads. The VMs are part of Google's lineup of predefined and custom VMs, which ranges from compute-optimized to accelerator-optimized machines. The A2 VMs are now generally available and ready for HPC applications such as CFD simulations with Altair ultraFluidX.

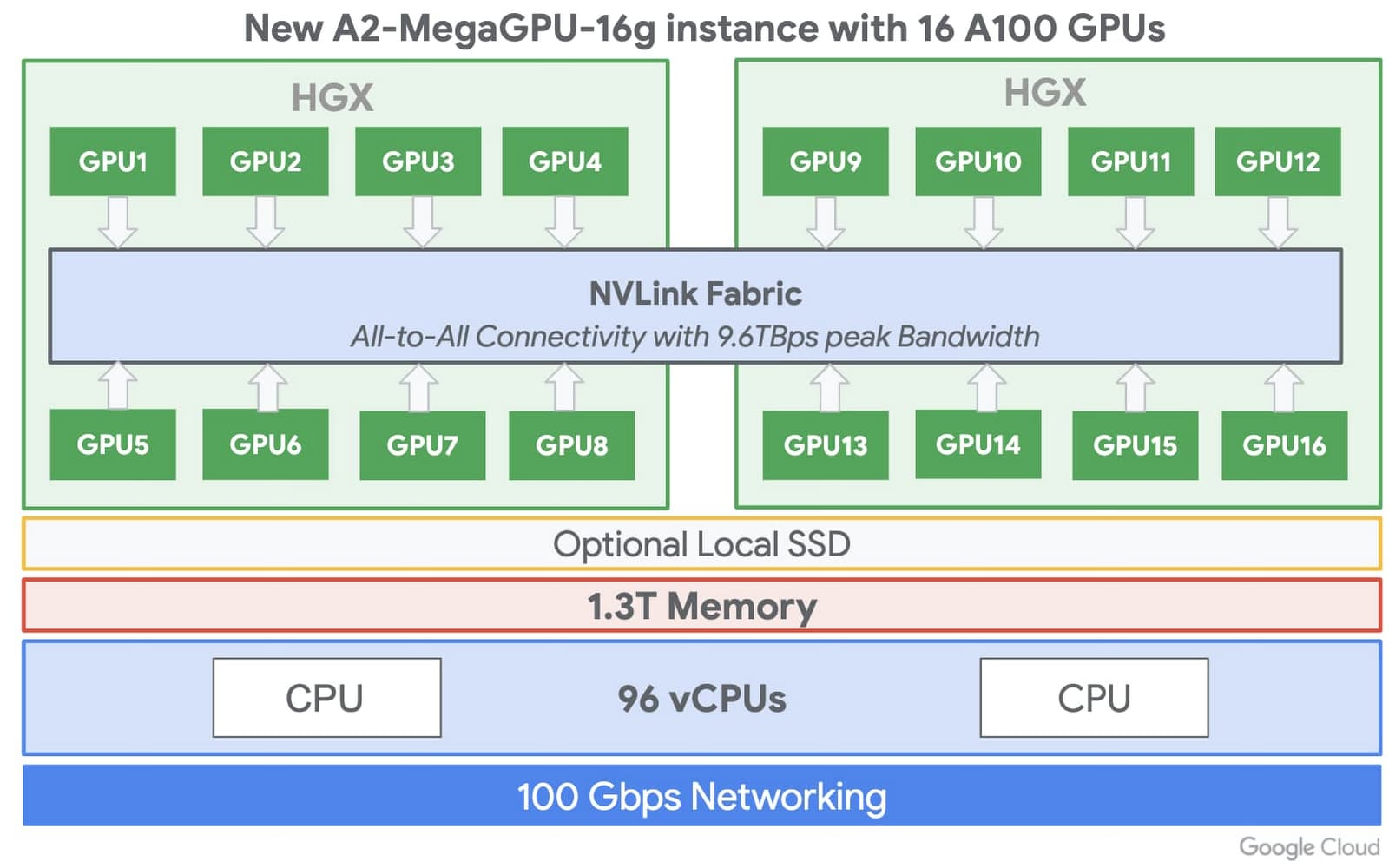

With the A2 VMs, customers can choose up to 16 NVIDIA A100 GPUs in a single VM, in addition to smaller configurations (1, 2, 4, and 8 GPUs per VM), which gives them flexibility and choice when scaling workloads. Moreover, each configuration runs on a single VM, so there is no need to configure multiple VMs for single-node ML training.
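As a rough illustration of how the listed configurations map to a workload's needs, the following sketch picks the smallest per-VM GPU count that covers a requested number of GPUs. The GPU counts come from the announcement; the function name `smallest_a2_config` and the selection rule are assumptions made for this example and are not part of any Google API.

```python
# Per-VM GPU counts listed for the A2 family in the announcement.
A2_GPU_COUNTS = (1, 2, 4, 8, 16)


def smallest_a2_config(gpus_needed: int) -> int:
    """Return the smallest listed per-VM GPU count that covers gpus_needed.

    Hypothetical helper for illustration only; it does not call any cloud API.
    """
    if gpus_needed < 1:
        raise ValueError("gpus_needed must be at least 1")
    for count in A2_GPU_COUNTS:
        if count >= gpus_needed:
            return count
    # More GPUs than the largest single-VM configuration offers.
    raise ValueError(
        f"{gpus_needed} GPUs exceeds the single-VM maximum of {A2_GPU_COUNTS[-1]}"
    )


if __name__ == "__main__":
    for wanted in (1, 3, 8, 12):
        print(wanted, "->", smallest_a2_config(wanted))
```

A request for 3 GPUs, for instance, resolves to the 4-GPU configuration, while anything above 16 would require more than one VM.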

Source: https://cloud.google.com/blog/products/compute/a2-vms-with-nvidia-a100-gpus-are-ga

Deep Learning VM images, which include drivers, NVIDIA CUDA-X AI libraries, and popular AI frameworks like TensorFlow and PyTorch, are also available for the A2 VMs. Furthermore, Google's pre-built TensorFlow Enterprise images support A100 optimizations for current and older versions of TensorFlow (1.15, 2.1, and 2.3).

With the A2 family, Google increases its investment in predefined and custom VMs in a market where other public cloud vendors, such as Microsoft and AWS, provide similar offerings. Holger Mueller, principal analyst and vice president at Constellation Research Inc., told InfoQ:

> While cloud vendors love to run AI / ML workloads on their proprietary cloud AI platforms, enterprises want to avoid that potential lock-in. It's a significant feat by Nvidia to have Ampere running on all major cloud platforms and giving customers the model portability across the clouds, effectively enabling AI / ML in the multi-cloud - and it is also not surprising that Google is the first from the big three IaaS vendors to embrace the latest Ampere platform - as the #3 IaaS vendor has to move faster than the larger competitors.

The A2 instances powered by NVIDIA's A100 GPUs are now available in Google's us-central1, asia-southeast1, and europe-west4 regions, with more regions to come online later this year. The A2 VMs are available on demand, as preemptible instances, and with committed use discounts. Lastly, in addition to Compute Engine, the A2 instances are fully supported by Google Kubernetes Engine, Cloud AI Platform, and other services.