Last year, Google introduced Cloud TPU Virtual Machines (VMs) in preview, giving users direct access to TPU host machines. Today, Cloud TPU VMs are generally available (GA), including the new TPU Embedding API, which can accelerate ML-based ranking and recommendation workloads.

With the GA release, Google has optimized Cloud TPU VMs for large-scale ranking and recommendation workloads. In a Google Cloud blog post, the company claims that the Embedding API can help businesses lower the costs of ranking and recommendation use cases, which commonly rely on deep neural network-based algorithms that can be expensive to run.

Vaibhav Singh, outbound product manager for Cloud TPU, and Max Sapoznikov, product manager for Cloud TPU at Google, wrote in the blog post:

Embedding APIs can efficiently handle large amounts of data, such as embedding tables, by automatically sharding across hundreds of Cloud TPU chips in a pod, all connected to one another via the custom-built interconnect.
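To make the idea of sharding an embedding table more concrete, here is a minimal, framework-free sketch in plain Python. It is not the TPU Embedding API: the class `ShardedEmbeddingTable`, the modulo placement rule, and the placeholder vector values are all assumptions chosen for illustration, with each in-memory shard standing in for one chip's memory.

```python
from typing import Dict, List


class ShardedEmbeddingTable:
    """Toy row-sharded embedding table (hypothetical, for illustration only).

    Each shard stands in for the memory of one accelerator chip.
    """

    def __init__(self, num_rows: int, dim: int, num_shards: int) -> None:
        self.num_shards = num_shards
        self.dim = dim
        # shards[s] maps a global row id to its vector, for rows owned by shard s.
        self.shards: List[Dict[int, List[float]]] = [{} for _ in range(num_shards)]
        for row in range(num_rows):
            # Deterministic placeholder values so the example is reproducible.
            vector = [float(row) + 0.1 * j for j in range(dim)]
            self.shards[self.shard_of(row)][row] = vector

    def shard_of(self, row: int) -> int:
        """Assumed placement rule: row id modulo the number of shards."""
        return row % self.num_shards

    def lookup(self, ids: List[int]) -> List[List[float]]:
        """Fetch each id from the shard that owns it, preserving input order."""
        return [self.shards[self.shard_of(i)][i] for i in ids]


table = ShardedEmbeddingTable(num_rows=10, dim=4, num_shards=4)
vectors = table.lookup([7, 2, 9])
shard_sizes = [len(shard) for shard in table.shards]
print(shard_sizes)  # rows are spread as evenly as the modulo rule allows
```

In a real pod, the lookup would involve communication between chips over the interconnect rather than a dictionary access, and the placement strategy would be chosen by the API rather than fixed by hand.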

Furthermore, the TPU VMs GA release supports three key frameworks: TensorFlow, PyTorch, and JAX. These are available through three optimized environments, one per framework, for ease of setup. Zak, a respondent on a Hacker News thread about the GA release of Cloud TPU VMs, stated:

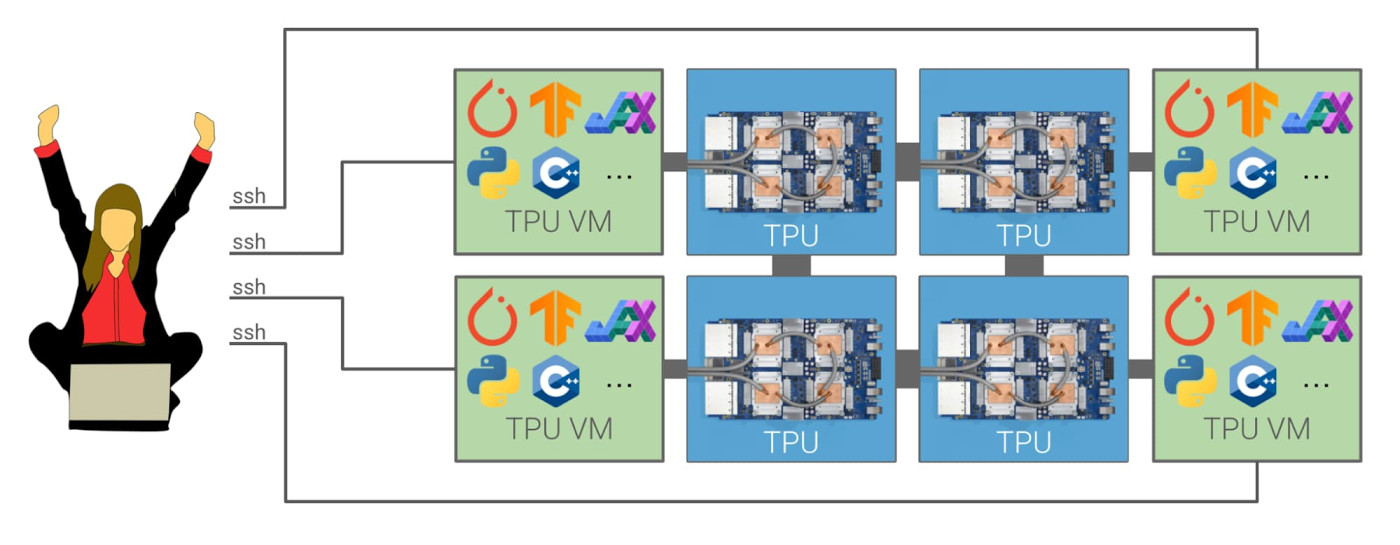

In the previous Cloud TPU architecture, PyTorch and JAX users had to create a separate CPU VM for every remote TPU host and arrange for these CPU hosts to communicate indirectly with the TPU hosts via gRPC. This was cumbersome and made debugging difficult. With TPU VMs, none of this is necessary. Instead, you can SSH directly into each TPU host machine and install arbitrary software on a VM to handle data loading and other tasks with much greater flexibility.

Source: https://cloud.google.com/blog/products/compute/introducing-cloud-tpu-vms

In addition, TPU VMs allow input data pipelines to run directly on the TPU hosts. Users can use this functionality to create their own custom operations, such as TensorFlow Text, and are no longer restricted to the TensorFlow runtime release version. Moreover, local execution on the host with the accelerator enables use cases such as distributed reinforcement learning.
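As a rough illustration of what a host-side input pipeline can look like, the sketch below batches records and prefetches them on a background thread so that the consumer (in practice, the training step feeding the accelerator) does not wait on preprocessing. It uses only the Python standard library; the function names `batches` and `prefetch`, and the doubling step used as placeholder preprocessing, are hypothetical and not part of any Cloud TPU API.

```python
import queue
import threading
from typing import Iterable, Iterator, List


def batches(records: Iterable[int], batch_size: int) -> Iterator[List[int]]:
    """Group preprocessed records into fixed-size batches (last one may be short)."""
    batch: List[int] = []
    for record in records:
        batch.append(record * 2)  # placeholder for real preprocessing
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch


def prefetch(source: Iterator[List[int]], buffer_size: int = 2) -> Iterator[List[int]]:
    """Produce items from `source` on a background thread, buffering up to `buffer_size`."""
    buffer: "queue.Queue[object]" = queue.Queue(maxsize=buffer_size)
    done = object()  # sentinel marking the end of the stream

    def worker() -> None:
        for item in source:
            buffer.put(item)  # blocks while the buffer is full
        buffer.put(done)

    threading.Thread(target=worker, daemon=True).start()
    while True:
        item = buffer.get()
        if item is done:
            return
        yield item  # type: ignore[misc]


consumed = list(prefetch(batches(range(5), batch_size=2)))
print(consumed)
```

Because the pipeline runs on the same machine as the accelerator, the batches do not have to cross the network before being consumed, which is the property the article attributes to TPU VMs.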

The same respondent Zak on the Hacker News thread said:

With TPU VMs, workloads that require lots of CPU-TPU communication can now do that communication locally instead of going over the network, which can improve performance.

Currently, Cloud TPU VMs are available in various regions, and pricing details can be found on the pricing page. Lastly, customers can get more information and guidance through the documentation, concepts, quickstarts, and tutorials.