AWS has announced a preview of a vector storage and search capability for Amazon OpenSearch Serverless. The capability is intended to support machine-learning-augmented search experiences and generative AI applications.

Amazon OpenSearch Serverless is the serverless offering of Amazon OpenSearch Service. Built on the open-source OpenSearch project, which in turn uses the Apache Lucene search library, Amazon OpenSearch enables ingestion, storage, search and analytics across JSON documents.

Historically, search in Lucene-based systems has relied on keyword matching combined with a scoring metric that determines the relevance of results. Keyword matching works by building an inverted index that maps each term to the documents in which it appears. Ranking, on the other hand, works by representing each document as a vector in a sparse N-dimensional space, where each dimension corresponds to a term from across the whole document collection and each weight is produced by the scoring metric. This approach, often referred to as lexical search, is efficient, but it can exclude documents that answer a query semantically yet do not contain its key terms. Vector search is a newer approach that aims to overcome this limitation.
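The lexical approach described above can be sketched in a few lines of Python. This is a minimal illustration, not Lucene's implementation: it assumes TF-IDF as the scoring metric (Lucene now defaults to BM25), a naive whitespace tokenizer and a made-up three-document corpus. It shows how the inverted index narrows the candidate set, and how a relevant document that does not share the query's exact terms is never scored.

```python
import math
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    # Naive tokenizer: lowercase and split on whitespace (real analyzers also stem, drop stopwords, etc.).
    return text.lower().split()


def build_inverted_index(docs: dict[str, str]) -> dict[str, set[str]]:
    # Inverted index: term -> IDs of the documents containing that term.
    index: dict[str, set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return index


def tfidf_vector(text: str, index: dict[str, set[str]], n_docs: int) -> dict[str, float]:
    # Sparse vector: only terms present in `text` (and known to the index) get a weight.
    vec: dict[str, float] = {}
    for term, tf in Counter(tokenize(text)).items():
        df = len(index.get(term, ()))
        if df:
            vec[term] = tf * math.log(1 + n_docs / df)  # smoothed IDF, always > 0
    return vec


def lexical_search(query: str, docs: dict[str, str], index: dict[str, set[str]]) -> list[tuple[str, float]]:
    n_docs = len(docs)
    # Keyword matching: candidates are documents sharing at least one query term.
    candidates = set().union(*(index.get(t, set()) for t in tokenize(query)))
    q_vec = tfidf_vector(query, index, n_docs)
    results = []
    for doc_id in candidates:
        d_vec = tfidf_vector(docs[doc_id], index, n_docs)
        # Ranking: dot product of the sparse query and document vectors.
        score = sum(w * d_vec.get(term, 0.0) for term, w in q_vec.items())
        results.append((doc_id, score))
    return sorted(results, key=lambda pair: pair[1], reverse=True)


# Hypothetical corpus for illustration.
docs = {
    "d1": "how to reset a forgotten password",
    "d2": "recover account access after losing login credentials",
    "d3": "password rules for new accounts",
}
index = build_inverted_index(docs)
print(lexical_search("forgot my password", docs, index))
```

Here `d2` is relevant to the query but is never even considered, because it contains none of the exact terms "forgot", "my" or "password".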

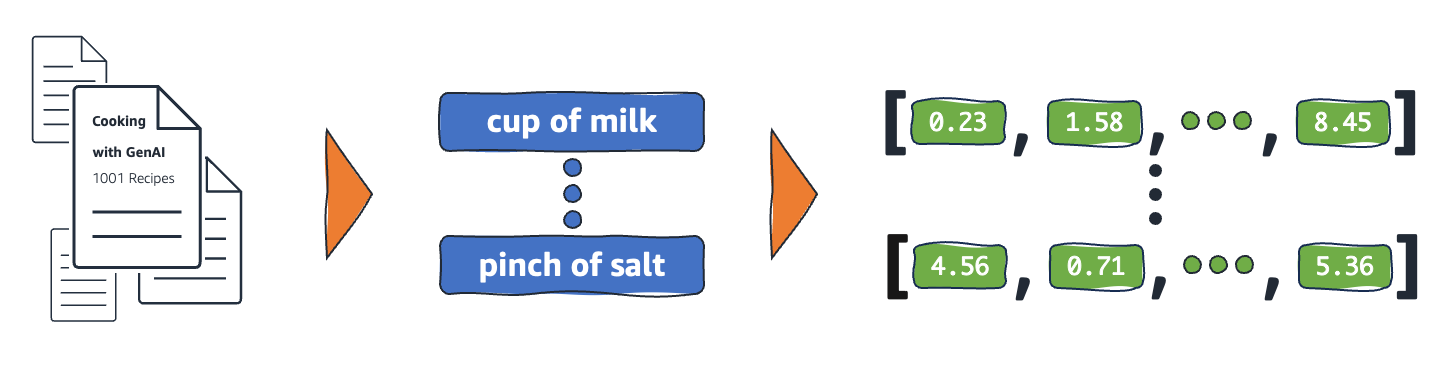

With Vector Search, a query is converted into a dense vector via a transformer model and results are found by identifying documents with similar vector representations.

An illustration of how text might be represented as a vector embedding (Source: AWS News Blog Post)

This requires a storage system that can index these vectors, known as embeddings, and find similar items by searching for neighboring vectors. Providing this functionality is the aim of the Vector Engine for Amazon OpenSearch Serverless.
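The nearest-neighbor search such a store performs can be illustrated with an exact, brute-force k-NN over a handful of embeddings. The four-dimensional vectors below are hypothetical stand-ins for real model output, and cosine similarity is just one possible metric. Production engines, including the OpenSearch k-NN plugin, generally rely on approximate algorithms such as HNSW rather than scoring every stored vector.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    # Cosine of the angle between two vectors: 1.0 means they point the same way.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def knn(query: list[float], embeddings: dict[str, list[float]], k: int) -> list[tuple[str, float]]:
    # Exact k-NN: score every stored embedding and keep the k most similar.
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in embeddings.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings; a real system would obtain these from an embedding model.
embeddings = {
    "reset-password": [0.9, 0.1, 0.0, 0.2],
    "recover-account": [0.8, 0.2, 0.1, 0.3],
    "pricing-page": [0.0, 0.9, 0.8, 0.1],
}
query_embedding = [0.85, 0.15, 0.05, 0.25]  # hypothetical embedding of "forgot my password"
print(knn(query_embedding, embeddings, k=2))
```

The "recover-account" document is returned even though its text need not share any word with the query, because its (hypothetical) embedding points in nearly the same direction.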

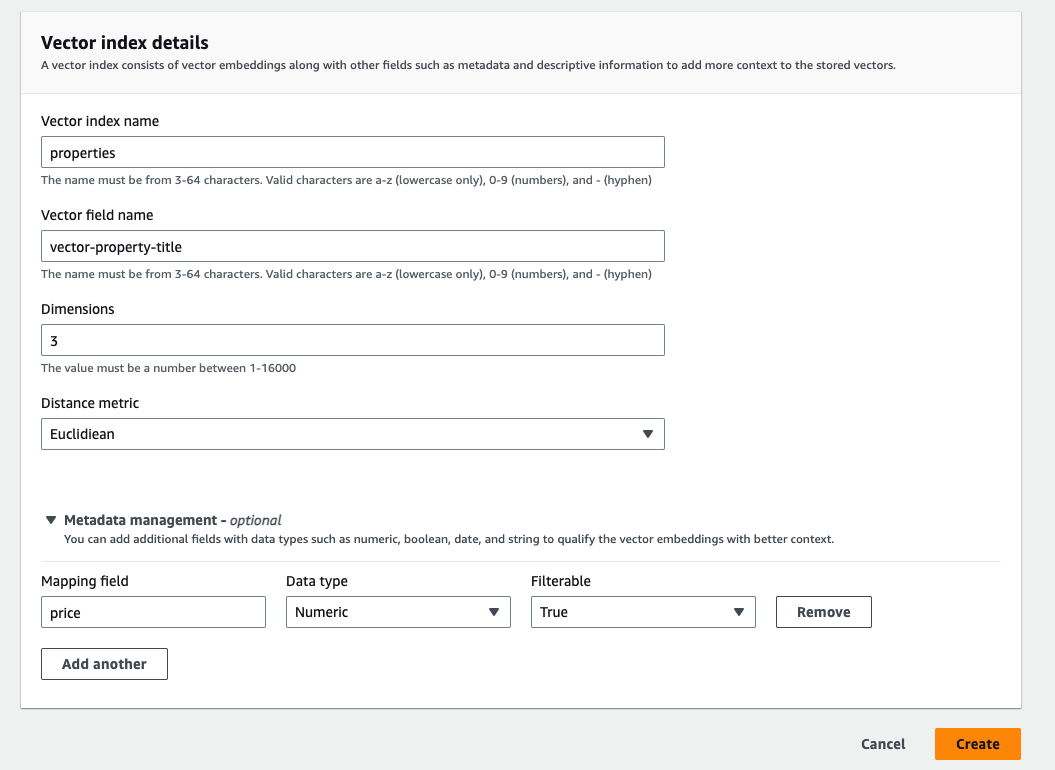

With the new engine, Vector Indexes for embeddings of up to 16,000 dimensions can now be created along with a similarity metric of Euclidean, Cosine or Dot-Product. In addition to the vector field, the new indexes allow each document to have up to 1000 fields as contextual metadata for search filters.

Screenshot of the vector index creation page on the AWS Console (Source: AWS News Blog Post)
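The three similarity metrics, and the role of metadata filters, can be illustrated with a brute-force sketch. The document IDs, two-dimensional vectors and `lang` metadata field are hypothetical, and filtering is applied before ranking purely for simplicity; the announcement does not describe how the vector engine combines filters with vector search internally. The example highlights one practical difference: dot product rewards vector magnitude, whereas cosine similarity ignores it.

```python
import math
from typing import Callable

Vector = list[float]


def euclidean_distance(a: Vector, b: Vector) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine_similarity(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def dot_product(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


# metric name -> (scoring function, whether a higher score means "more similar")
METRICS: dict[str, tuple[Callable[[Vector, Vector], float], bool]] = {
    "euclidean": (euclidean_distance, False),  # a distance: smaller is closer
    "cosine": (cosine_similarity, True),
    "dot_product": (dot_product, True),
}


def filtered_search(query: Vector, docs: list[dict], metric: str,
                    filters: dict[str, str], k: int) -> list[tuple[str, float]]:
    score_fn, higher_is_better = METRICS[metric]
    # Metadata filter: keep only documents whose metadata matches every key/value pair.
    candidates = [
        doc for doc in docs
        if all(doc["metadata"].get(key) == value for key, value in filters.items())
    ]
    scored = [(doc["id"], score_fn(query, doc["vector"])) for doc in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=higher_is_better)
    return scored[:k]


# Hypothetical documents: a vector plus a metadata field usable as a filter.
docs = [
    {"id": "a", "vector": [1.0, 0.0], "metadata": {"lang": "en"}},
    {"id": "b", "vector": [0.0, 1.0], "metadata": {"lang": "en"}},
    {"id": "c", "vector": [1.0, 0.1], "metadata": {"lang": "de"}},
    {"id": "d", "vector": [3.0, 3.0], "metadata": {"lang": "en"}},
]
query = [1.0, 0.0]
for metric in METRICS:
    print(metric, filtered_search(query, docs, metric, {"lang": "en"}, k=1))
```

With the `lang` filter applied, Euclidean distance and cosine similarity both pick `a`, while dot product picks `d` simply because its vector is longer; this is why dot product is usually paired with normalized embeddings.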

By building on the OpenSearch Serverless architecture, the vector engine removes the need for users to size, tune or scale the underlying infrastructure. It also supports the OpenSearch suite of APIs, so existing lexical search, filtering, aggregation and geospatial query functionality can be used in tandem with vector search.

Screenshot of an OpenSearch query combining vector and lexical search approaches (Source: AWS News Blog Post)
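A query that mixes vector and lexical search can be assembled as a plain request body. The sketch below is written against the `knn_vector` field type and `knn` query clause of the OpenSearch k-NN plugin; it builds an index definition and a `bool` query combining a k-NN clause, a lexical `match` clause and an optional `term` filter. The field names (`embedding`, `title`, `category`), the `hnsw`/`nmslib` method settings and the way the clauses are combined are assumptions for illustration, not the query shown in the announcement, and the engines and options OpenSearch Serverless accepts should be checked against its documentation.

```python
import json
from typing import Optional

# Hypothetical field names used throughout this sketch.
VECTOR_FIELD = "embedding"
TEXT_FIELD = "title"
CATEGORY_FIELD = "category"
MAX_DIMENSION = 16_000  # upper limit quoted in the announcement


def index_body(dimension: int, space_type: str = "cosinesimil") -> dict:
    """Index definition with a k-NN vector field plus text and keyword metadata fields."""
    if not 1 <= dimension <= MAX_DIMENSION:
        raise ValueError(f"dimension must be between 1 and {MAX_DIMENSION}")
    return {
        "settings": {"index": {"knn": True}},
        "mappings": {
            "properties": {
                VECTOR_FIELD: {
                    "type": "knn_vector",
                    "dimension": dimension,
                    # Assumed method settings; space_type could also be "l2" or "innerproduct".
                    "method": {"name": "hnsw", "engine": "nmslib", "space_type": space_type},
                },
                TEXT_FIELD: {"type": "text"},
                CATEGORY_FIELD: {"type": "keyword"},
            }
        },
    }


def hybrid_query(text: str, vector: list[float], k: int, category: Optional[str] = None) -> dict:
    """bool query: a document must match the k-NN clause, the lexical clause, or both."""
    bool_query: dict = {
        "should": [
            {"knn": {VECTOR_FIELD: {"vector": vector, "k": k}}},
            {"match": {TEXT_FIELD: text}},
        ],
        "minimum_should_match": 1,
    }
    if category is not None:
        # Non-scoring metadata filter on a keyword field.
        bool_query["filter"] = [{"term": {CATEGORY_FIELD: category}}]
    return {"size": k, "query": {"bool": bool_query}}


mapping = index_body(4)
body = hybrid_query("reset password", [0.12, -0.03, 0.57, 0.21], k=5, category="support")
print(json.dumps(body, indent=2))
```

These dictionaries could be passed to a client such as opensearch-py (`client.indices.create(index=..., body=...)` and `client.search(index=..., body=...)`). Note that a `bool` query sums the scores of its matching `should` clauses, and k-NN scores and lexical scores are on different scales, so in practice the two clauses usually need weighting or normalization. Depending on the engine, a filter may also be applied after the approximate search, returning fewer than `k` hits.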

A drawback of the tight integration with OpenSearch Serverless is that the vector engine is subject to the same minimum of 4 OpenSearch Compute Units (OCUs) as other workloads. Community feedback on Amazon OpenSearch Serverless has focused primarily on its relatively high cost, with Reddit user ralusek commenting in an r/aws thread:

"...it seems as though we're looking at a $700/mo minimum fee... for tinkering with projects, this just seems absurdly high..."

The Amazon OpenSearch Serverless team appears to have taken these pain points on board: the announcement included plans to lower the minimum requirement to 1 OCU. The team is also offering 1,400 OCU-hours per month free of charge so that customers can experiment before the vector engine reaches general availability.
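The figures above can be sanity-checked with some back-of-the-envelope arithmetic. This sketch assumes a price of $0.24 per OCU-hour and an average month of 730 hours; the price is an illustrative assumption (rates vary by region and over time, so check the current OpenSearch Serverless pricing), and storage, which is billed separately, is ignored.

```python
HOURS_PER_MONTH = 730        # average month: 8,760 hours / 12
PRICE_PER_OCU_HOUR = 0.24    # ASSUMPTION: illustrative USD price; varies by region and over time


def monthly_compute_cost(min_ocus: float, free_ocu_hours: float = 0.0,
                         price: float = PRICE_PER_OCU_HOUR, hours: float = HOURS_PER_MONTH) -> float:
    """Compute-only cost (USD) of running `min_ocus` for a full month, minus any free OCU-hours."""
    billable_hours = max(0.0, min_ocus * hours - free_ocu_hours)
    return billable_hours * price


scenarios = {
    "4-OCU minimum": monthly_compute_cost(4),
    "4-OCU minimum, 1,400 free OCU-hours": monthly_compute_cost(4, free_ocu_hours=1400),
    "planned 1-OCU minimum": monthly_compute_cost(1),
    "planned 1-OCU minimum, 1,400 free OCU-hours": monthly_compute_cost(1, free_ocu_hours=1400),
}
for name, cost in scenarios.items():
    print(f"{name}: ${cost:,.2f}/month")
```

Under these assumptions the 4-OCU minimum comes to 2,920 OCU-hours, or about $700.80 a month, in line with the quoted $700/mo. The free allowance would cut that to about $364.80, and a 1-OCU minimum (730 OCU-hours, about $175.20) would fit entirely within 1,400 free OCU-hours if both applied at once.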

For similar generative AI and machine learning workloads, alternatives to the Vector Engine for Amazon OpenSearch Serverless include PostgreSQL with the pgvector extension, Elasticsearch's vector search and Pinecone.

Finally, further information on how to get started with the Vector Engine for Amazon OpenSearch Serverless can be found on its documentation page.